chatpack: How I Compressed 11 Million Tokens Down to 850K for Chat Analysis


From manual scripts to automation: A Rust CLI tool for preparing chat exports for LLM analysis

The Problem Nobody Notices

Gemini refused to analyze my chat. "Failed to generate content. Please try again." And I needed to understand what patterns exist in how our community communicates.

I was trying to analyze a private developer group at the programming school where I study. I needed to identify trends in how students communicate and which topics come up most often: why do so few students make it to the end? What changed when the platform was updated? What advice did graduates give?

I exported the chat from Telegram. 34 thousand messages, three years of history. Opened result.json - 26 megabytes. The problem isn't the file size. The problem is that 80% of those 26 megabytes is noise: JSON brackets, "type": "message" keys on every line, metadata about stickers you don't need.

Before this, I solved similar tasks with scripts that searched for keywords to find relevant sections. I sorted by nicknames and dates. The most annoying part - everything had to be done manually, and some data got lost due to this crude initial filtering.

That's how chatpack was born.

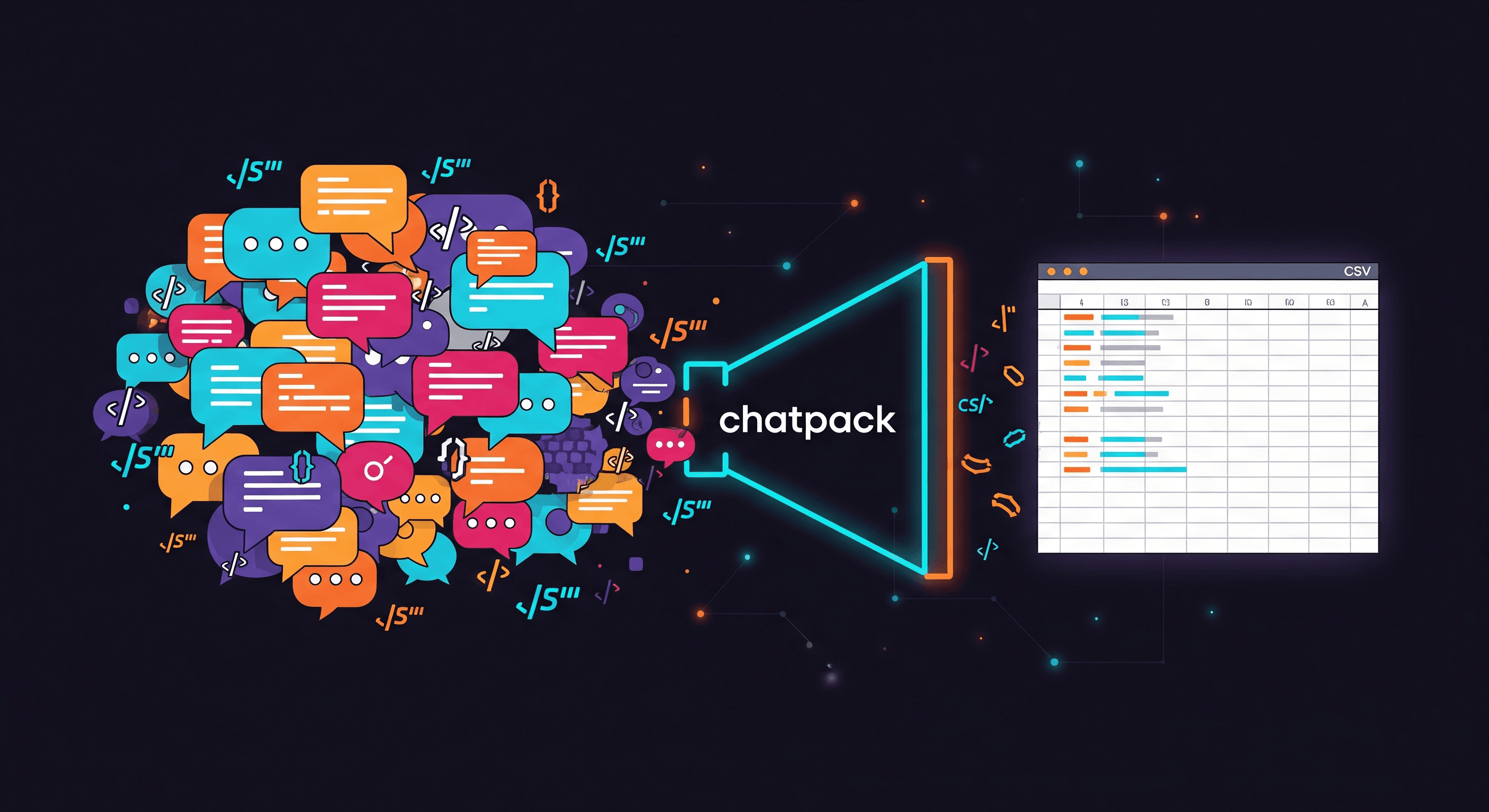

What chatpack Does

Telegram JSON (11M tokens)

↓

[chatpack]

↓

CSV (850K tokens) → LLM → Insights

One command:

chatpack tg result.json -o context.csv

Result:

Input: 11.2M tokens (raw Telegram JSON)

Output: 850K tokens (optimized CSV)

Compression: 13x

Now you can upload this CSV to an LLM with an actual prompt:

Here's our team's chat history for 2023.

1. Identify the top 5 most discussed topics

2. Who was most active in each quarter?

3. Is there a correlation between time of day and message tone?

Supported Platforms

chatpack tg chat.json # Telegram

chatpack wa chat.txt # WhatsApp

chatpack ig message_1.json # Instagram

chatpack dc export.json # Discord

I started with Telegram work groups. Then I added other messengers - to automate my processes and identify common trends.

Why CSV Compresses Best

Comparison on real data (34K messages):

| Format | Tokens | Compression |
| --- | --- | --- |
| Raw JSON | 11,177,258 | baseline |
| CSV | 849,915 | 13.2x |
| JSONL | 1,029,130 | 10.9x |
| JSON (clean) | 1,333,586 | 8.4x |
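The compression column is simply the raw-JSON token count divided by each format's token count. A small sketch of that arithmetic, using the numbers from the table (the function names are made up for this illustration):

```rust
/// Compression ratio of a format relative to the raw-JSON baseline.
fn compression_ratio(baseline_tokens: u64, tokens: u64) -> f64 {
    baseline_tokens as f64 / tokens as f64
}

/// Formats a ratio with one decimal place, the way the table shows it.
fn format_ratio(ratio: f64) -> String {
    format!("{:.1}x", ratio)
}
```

For example, `format_ratio(compression_ratio(11_177_258, 849_915))` reproduces the CSV row of the table.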

Why such a difference?

// JSON: each message is

{"sender": "Alice", "content": "Hello"}

// CSV: just data

Alice;Hello

JSON wastes tokens on:

Brackets {} and []

Keys "sender": and "content":, repeated for every message

Quotes around every string

CSV uses ; as a delimiter (one character) and writes keys only in the header.
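To make the overhead concrete, here is a rough sketch that serializes the same messages both ways and lets you compare the sizes. It is not chatpack's writer: the `Message` struct and both functions are assumptions for illustration, no escaping is done, and it counts bytes rather than tokens, so treat the difference as an approximation of the token savings.

```rust
/// A chat message reduced to the two fields from the example above.
/// (Hypothetical type for this sketch, not chatpack's internal one.)
struct Message {
    sender: String,
    content: String,
}

/// One JSON object per line: braces, keys and quotes repeat for every message.
/// No escaping is performed; this is only meant for a size comparison.
fn to_json_lines(messages: &[Message]) -> String {
    messages
        .iter()
        .map(|m| format!("{{\"sender\": \"{}\", \"content\": \"{}\"}}\n", m.sender, m.content))
        .collect()
}

/// Semicolon-separated rows: the keys appear once, in the header.
fn to_csv(messages: &[Message]) -> String {
    let mut out = String::from("Sender;Content\n");
    for m in messages {
        out.push_str(&format!("{};{}\n", m.sender, m.content));
    }
    out
}
```

With a single "Alice / Hello" message the CSV is already shorter despite its header, and the gap grows with every additional row because only the JSON repeats its keys.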

You can also work with CSV in Excel - calculate response times, average message length, build engagement charts.

Merge Consecutive: The Obvious Feature Everyone Ignores

A typical chat looks like this:

Alice: Hey

Alice: How are you?

Alice: Did you see the new project?

Bob: Yeah, I looked

Bob: Pretty good overall

After merge:

Alice: Hey

How are you?

Did you see the new project?

Bob: Yeah, I looked

Pretty good overall

Here's what the actual CSV output looks like:

# Without merge (5 rows, ~45 tokens)

Sender;Content

Alice;Hey

Alice;How are you?

Alice;Did you see the new project?

Bob;Yeah, I looked

Bob;Pretty good overall

# With merge (2 rows, ~35 tokens)

Sender;Content

Alice;Hey

How are you?

Did you see the new project?

Bob;Yeah, I looked

Pretty good overall

5 messages → 2 entries. On 34K messages, this gives 24% reduction before even choosing a format.

But the main benefit isn't tokens. A single complete thought embeds much better than five fragments of the same thought. For RAG pipelines, this is critical.

Anyone who has worked with large chats eventually arrives at merge. This isn't an insight - it's common knowledge among those who analyze conversations.
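The merge step itself is small. Below is a minimal standalone sketch of the idea, not the crate's actual implementation: the `Message` struct and the function name `merge_same_sender` are assumptions, and joining with a newline is just one reasonable choice.

```rust
/// Hypothetical message type for this sketch.
#[derive(Debug, Clone, PartialEq)]
struct Message {
    sender: String,
    content: String,
}

/// Merges runs of consecutive messages from the same sender into one entry,
/// joining their texts with newlines. Order of messages is preserved.
fn merge_same_sender(messages: Vec<Message>) -> Vec<Message> {
    let mut merged: Vec<Message> = Vec::new();
    for msg in messages {
        if let Some(last) = merged.last_mut() {
            if last.sender == msg.sender {
                // Same author as the previous entry: append to it.
                last.content.push('\n');
                last.content.push_str(&msg.content);
                continue;
            }
        }
        // Different author (or first message): start a new entry.
        merged.push(msg);
    }
    merged
}
```

Applied to the Alice/Bob example above, the five input messages collapse into two entries, one per speaker turn.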

Why Not Just Use jq or a Python Script?

You could write jq '.messages[] | {from, text}', but that won't give you merging, date filters, or Mojibake repair for Instagram exports. chatpack handles all the edge cases so you don't have to.

Architecture: Why Traits, Not If-Else

When I wrote the Telegram parser, I built in extensibility from the start:

pub trait ChatParser: Send + Sync {

fn name(&self) -> &'static str;

fn parse(&self, file_path: &str) -> Result<Vec<InternalMessage>, Box<dyn Error>>;

}

Adding a new messenger - three steps:

// 1. Implement the parser

pub struct SignalParser;

impl ChatParser for SignalParser {

fn name(&self) -> &'static str { "Signal" }

fn parse(&self, path: &str) -> Result<Vec<InternalMessage>, Box<dyn Error>> {

// ...

}

}

// 2. Add to Source enum

pub enum Source { Telegram, WhatsApp, Instagram, Discord, Signal }

// 3. Add to factory

Source::Signal => Box::new(SignalParser),

This was a planned decision from day one. The Strategy pattern isn't overengineering when you know you'll be extending.
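For readers who want to see the three steps fit together, here is a compilable sketch of the same shape. The fields of `InternalMessage`, the stub parser bodies, and the exact signature of `create_parser` are assumptions made for this illustration; the real crate may differ.

```rust
use std::error::Error;

/// Assumed minimal message shape; the real type likely has more fields.
#[derive(Debug, Clone, PartialEq)]
pub struct InternalMessage {
    pub sender: String,
    pub content: String,
}

pub trait ChatParser: Send + Sync {
    fn name(&self) -> &'static str;
    fn parse(&self, file_path: &str) -> Result<Vec<InternalMessage>, Box<dyn Error>>;
}

pub struct TelegramParser;
pub struct SignalParser;

impl ChatParser for TelegramParser {
    fn name(&self) -> &'static str { "Telegram" }
    fn parse(&self, _file_path: &str) -> Result<Vec<InternalMessage>, Box<dyn Error>> {
        Ok(Vec::new()) // stub: a real parser would read and decode the file
    }
}

impl ChatParser for SignalParser {
    fn name(&self) -> &'static str { "Signal" }
    fn parse(&self, _file_path: &str) -> Result<Vec<InternalMessage>, Box<dyn Error>> {
        Ok(Vec::new()) // stub, same as above
    }
}

pub enum Source { Telegram, Signal }

/// Factory: the only place that knows which concrete parser backs each source.
pub fn create_parser(source: Source) -> Box<dyn ChatParser> {
    match source {
        Source::Telegram => Box::new(TelegramParser),
        Source::Signal => Box::new(SignalParser),
    }
}
```

The calling code only ever sees `Box<dyn ChatParser>`, so adding a messenger touches the enum and the factory and nothing else.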

Practical Use Cases

Engagement Analysis

I needed to add an engagement metric - to identify when a person is most active and how this changed over the years.

chatpack tg group.json -t -o timeline.csv

The -t flag adds timestamps. Now the CSV has a date column for each message:

Timestamp;Sender;Content

2024-01-15 10:30:00;Alice;Good morning!

2024-01-15 10:31:00;Bob;Hey

Upload to Claude: "Build an activity chart by hour for each participant."

An LLM can't understand what someone is doing outside the chat. But general trends - when the platform changed, who was active during which period, how team mood shifted - it identifies perfectly.
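If you would rather compute the hourly activity yourself than ask an LLM, the timestamped CSV is easy to aggregate. A minimal sketch, assuming the `Timestamp;Sender;Content` layout shown above, the `YYYY-MM-DD HH:MM:SS` timestamp format, and single-line rows (merged multi-line content is not handled); the function name is made up for this example.

```rust
use std::collections::BTreeMap;

/// Counts messages per (sender, hour of day) from rows shaped like
/// `2024-01-15 10:30:00;Alice;Good morning!`. The first line is treated
/// as the header; rows that do not parse are skipped.
fn activity_by_hour(csv: &str) -> BTreeMap<(String, u8), usize> {
    let mut counts = BTreeMap::new();
    for line in csv.lines().skip(1) {
        let mut fields = line.splitn(3, ';');
        let timestamp = match fields.next() {
            Some(t) => t,
            None => continue,
        };
        let sender = match fields.next() {
            Some(s) => s,
            None => continue,
        };
        // The hour sits at byte positions 11..13 of "YYYY-MM-DD HH:MM:SS".
        let hour = match timestamp.get(11..13).and_then(|h| h.parse::<u8>().ok()) {
            Some(h) if h < 24 => h,
            _ => continue,
        };
        *counts.entry((sender.to_string(), hour)).or_insert(0usize) += 1;
    }
    counts
}
```

The resulting map can be dumped to a chart or a pivot table; because it is a `BTreeMap`, entries come out sorted by sender and then by hour.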

HR Patterns

I use filters often when I need to identify patterns for a specific person:

chatpack tg chat.json --from "Ivan" -o ivan.csv

I get all messages from one participant. Then analyze:

Grammar

Humor style

Average message length

Activity times

It's like HR screening before team selection - just automated.

Project Archaeology

The most useful case for me personally:

chatpack tg chat.json --after 2022-01-01 --before 2023-01-01 -o year_2022.csv

I was looking for information about the old platform, former staff and students, advice from graduates about where they got jobs. The LLM handled the task, identifying what I needed. Over time, I formulated concrete ideas for improving the platform.
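Conceptually, the `--from`, `--after` and `--before` flags are just predicates applied to every message. The sketch below shows one way to express that. It is an illustration, not chatpack's code: the `Message` and `Filter` types are hypothetical, dates are plain `YYYY-MM-DD` strings (which compare correctly as text), and treating `after` as inclusive and `before` as exclusive is an assumption.

```rust
/// Hypothetical message type with an ISO date ("YYYY-MM-DD").
#[derive(Debug, Clone, PartialEq)]
struct Message {
    date: String,
    sender: String,
    content: String,
}

/// Optional criteria; `None` means "do not filter on this field".
#[derive(Default)]
struct Filter<'a> {
    from: Option<&'a str>,
    after: Option<&'a str>,  // assumed inclusive lower bound
    before: Option<&'a str>, // assumed exclusive upper bound
}

impl<'a> Filter<'a> {
    fn matches(&self, msg: &Message) -> bool {
        self.from.map_or(true, |s| msg.sender == s)
            && self.after.map_or(true, |d| msg.date.as_str() >= d)
            && self.before.map_or(true, |d| msg.date.as_str() < d)
    }
}

/// Returns the messages that satisfy every criterion set in `filter`.
fn apply_filter(messages: &[Message], filter: &Filter) -> Vec<Message> {
    messages.iter().filter(|m| filter.matches(m)).cloned().collect()
}
```

Because every criterion is optional, the same function covers "everything from Ivan", "everything in 2022", and any combination of the two.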

Technical Details

Performance

Speed: 20-50K messages/sec

Memory: ~3x file size (entire JSON in memory)

Ceiling: files up to 500MB comfortably, 1GB+ needs streaming (not implemented)

Why no streaming? I just needed a library that would solve my problem. Most of the time, message exports don't exceed 1 MB. I consciously chose not to overcomplicate things.

When chatpack Won't Help

Files >1GB - no streaming, will eat your RAM

Media context needed - photos, voice messages are ignored, text only

Real-time analysis - this is a batch tool

Preserving exact JSON structure - chatpack normalizes everything to a flat format

Why Rust

Rust is currently my main stack. I used it because the project fits the criteria:

CLI tool - Rust is ideal

Need speed - check

Need reliability - types prevent mistakes

Thanks to this project, I also learned how to work with WASM and built a web version.

Handling Edge Cases

WhatsApp exports dates in different formats depending on locale:

[1/15/24, 10:30 AM] // US

[15.01.24, 10:30] // EU

26.10.2025, 20:40 - // RU

chatpack auto-detects the format from the first 20 lines and parses correctly.
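One way such auto-detection can work is to classify each of the first 20 lines and take the majority. The sketch below is a simplified illustration under stated assumptions, not chatpack's actual detector: the enum, the function names and the matching rules are invented here, only the three layouts shown above are recognized, and a day-first date written with slashes would be misclassified as US.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum DateFormat {
    UsBracketed, // [1/15/24, 10:30 AM]
    EuBracketed, // [15.01.24, 10:30]
    RuDashed,    // 26.10.2025, 20:40 -
}

/// True if `date` is three non-empty digit groups separated by `sep`.
fn is_numeric_date(date: &str, sep: char) -> bool {
    let parts: Vec<&str> = date.split(sep).collect();
    parts.len() == 3
        && parts.iter().all(|p| !p.is_empty() && p.chars().all(|c| c.is_ascii_digit()))
}

/// Classifies one line, or returns None for continuation lines and noise.
fn classify_line(line: &str) -> Option<DateFormat> {
    if let Some(rest) = line.strip_prefix('[') {
        let end = rest.find(']')?;
        let date = rest[..end].split(',').next()?;
        if is_numeric_date(date, '/') {
            Some(DateFormat::UsBracketed)
        } else if is_numeric_date(date, '.') {
            Some(DateFormat::EuBracketed)
        } else {
            None
        }
    } else {
        let (stamp, _) = line.split_once(" - ")?;
        let date = stamp.split(',').next()?;
        if is_numeric_date(date, '.') { Some(DateFormat::RuDashed) } else { None }
    }
}

/// Picks the format seen most often in the first 20 lines, if any.
fn detect_format(text: &str) -> Option<DateFormat> {
    let seen: Vec<DateFormat> = text.lines().take(20).filter_map(classify_line).collect();
    let candidates = [DateFormat::UsBracketed, DateFormat::EuBracketed, DateFormat::RuDashed];
    candidates
        .iter()
        .copied()
        .map(|fmt| (seen.iter().filter(|d| **d == fmt).count(), fmt))
        .filter(|(count, _)| *count > 0)
        .max_by_key(|(count, _)| *count)
        .map(|(_, fmt)| fmt)
}
```

Voting over several lines rather than trusting the first one matters because multi-line messages produce continuation lines that carry no date at all.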

Instagram stores UTF-8 text as if every byte were an ISO-8859-1 character (Mojibake). "Привет" shows up as something like "ÐŸÑ€Ð¸Ð²ÐµÑ‚". The parser fixes this automatically.
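The repair is the reverse of the damage: turn each character back into the single byte it came from, then decode those bytes as UTF-8. A minimal sketch of that idea (the function name is made up, and this is not necessarily how chatpack implements it):

```rust
/// Tries to undo "UTF-8 bytes read as Latin-1" Mojibake.
/// Returns None if the text contains characters above U+00FF or if the
/// recovered bytes are not valid UTF-8, i.e. the input was probably fine.
fn fix_mojibake(text: &str) -> Option<String> {
    let bytes: Option<Vec<u8>> = text
        .chars()
        .map(|c| if (c as u32) <= 0xFF { Some(c as u8) } else { None })
        .collect();
    String::from_utf8(bytes?).ok()
}
```

A caller would typically fall back to the original string when the function returns `None`, for example `fix_mojibake(s).unwrap_or_else(|| s.to_string())`, so that text which was never garbled passes through untouched.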

WASM: chatpack in the Browser

Not everyone wants to install a CLI. Some people just need to convert one file without touching the terminal. That's why there's chatpack.berektassuly.com.

The workflow is simple:

Drag & drop your export file

Choose output format

Download the result

The key point: your file never leaves your machine. Everything is processed locally in the browser via WebAssembly. No server, no uploads, no privacy concerns.

Repository: github.com/Berektassuly/chatpack-web

Installation and Usage

Pre-built Binaries

Download for your platform from GitHub Releases:

Windows (x64)

macOS (Intel and Apple Silicon)

Linux (x64)

Via Cargo

cargo install chatpack

As a Library

[dependencies]

chatpack = "0.3"

use chatpack::prelude::*;

fn main() -> Result<(), Box<dyn std::error::Error>> {

    let parser = create_parser(Source::Telegram);

    let messages = parser.parse("chat.json")?;

    let merged = merge_consecutive(messages);

    write_csv(&merged, "output.csv", &OutputConfig::new())?;

    Ok(())

}

Conclusion

chatpack is a tool I wrote for myself. The problem was specific: analyze chat history through an LLM. The solution turned out to be universal.

If I were starting today, I'd keep the development process the same:

Telegram parser

Trait for extensibility

Other messengers as needed

WASM for those who find CLI overkill

If anyone needs it - feel free to use it in your project. If there are issues, I'll listen to what users need and fix them.

Links:

GitHub: github.com/berektassuly/chatpack

WASM repo: github.com/Berektassuly/chatpack-web

Author: Mukhammedali Berektassuly